Disclaimer

This guide is for research purposes only. Running a Render Network node may involve hardware costs, electricity costs, uptime requirements, software maintenance, wallet setup, and exposure to RENDER token price volatility. Operator approval, workload assignment, job utilization, and rewards are not guaranteed. Do not buy new hardware based only on illustrative reward examples. Base any decision to apply on your own electricity rate, hardware load, location, and expected uptime.

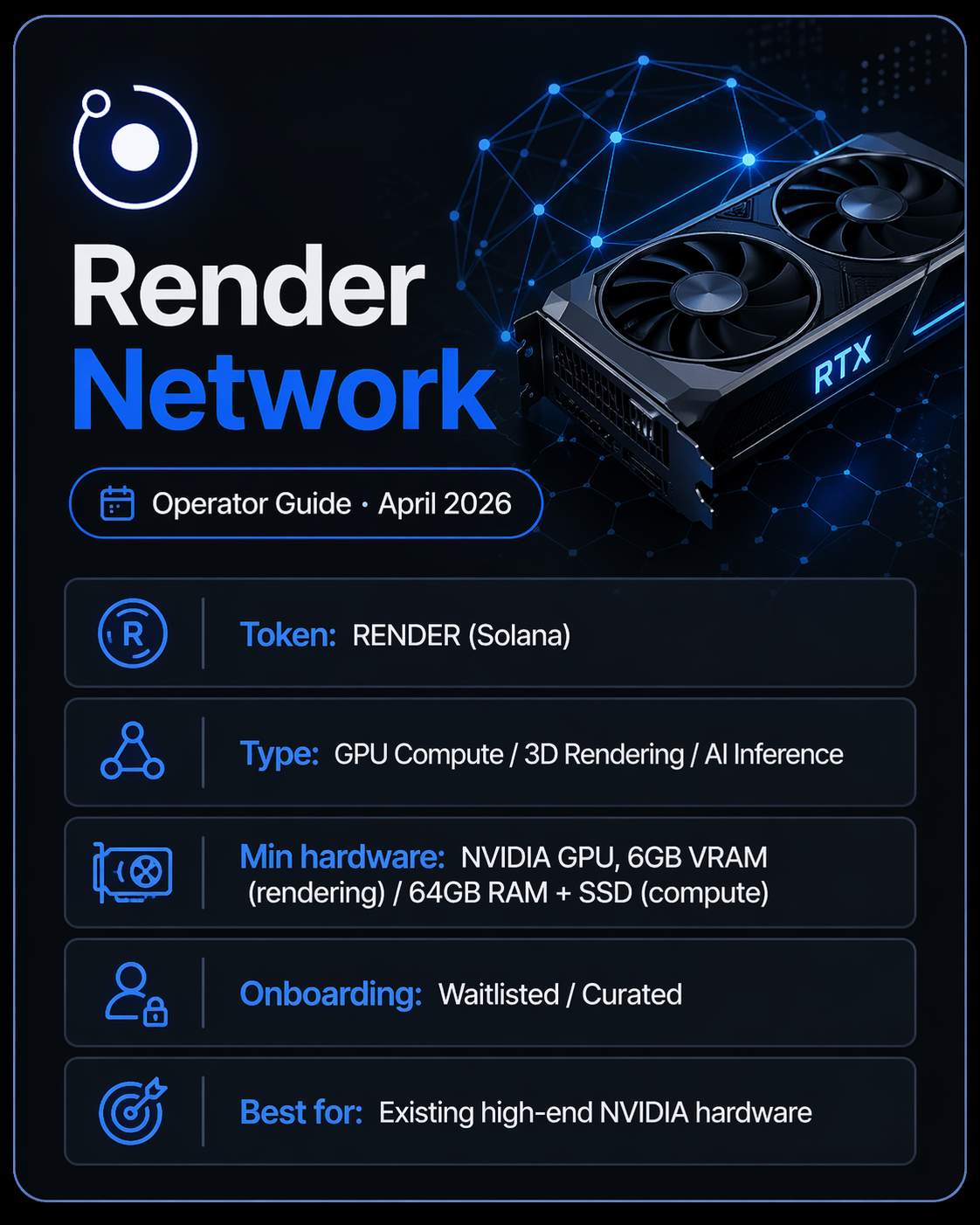

| Token | RENDER (Solana) |
|---|---|
| Network Type | GPU Compute / 3D Rendering / AI Inference |
| Minimum Hardware | NVIDIA GPU, 6GB VRAM (rendering) / 64GB RAM + SSD (compute; verify current FAQ) |
| Onboarding | Waitlisted / Curated |
| Best For | Operators who already own high-end NVIDIA hardware |

Introduction

This guide is for GPU owners, homelab operators, and small infrastructure operators asking whether running a Render Network node is realistic in 2026. It covers access, hardware, setup, rewards, costs, and risks, drawn from official documentation, governance proposals, and publicly available operator discussions. Enterprise deployments and large-scale GPU farms are out of scope.

One question, answered plainly: can a home operator with existing NVIDIA hardware participate in Render Network, and is it worth the effort?

What Is Render Network?

Render Network is a decentralized GPU compute marketplace. Creators and compute clients submit jobs, pay in RENDER, and node operators run those jobs on their GPUs. The network started with OctaneRender-based 3D rendering and has since expanded into AI inference and general compute through its Dispersed subnet. It runs on Solana. Operators earn RENDER through a Burn-and-Mint Equilibrium model: creators burn RENDER when paying for work, and new RENDER is minted each epoch and distributed to operators based on availability and completed jobs.
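A toy single-epoch model can make the Burn-and-Mint mechanics concrete. This Python sketch is not Render's emission logic: it assumes jobs are priced in USD, and the fee total, token price, emission size, and the single per-operator weight used for a pro-rata split (a stand-in for availability and completed jobs) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EpochResult:
    burned: float               # RENDER burned by creators paying for jobs
    minted: float               # RENDER newly minted this epoch
    net_supply_change: float    # minted - burned; negative means net deflation
    payouts: dict[str, float]   # RENDER paid to each operator


def simulate_epoch(job_fees_usd: float, render_price_usd: float,
                   emission: float, weights: dict[str, float]) -> EpochResult:
    """Toy Burn-and-Mint epoch; every parameter here is hypothetical.

    Assumes jobs are priced in USD, so the RENDER burned depends on the
    token price. The emission is split pro rata by a single weight per
    operator, standing in for availability and completed jobs.
    """
    burned = job_fees_usd / render_price_usd
    total_weight = sum(weights.values())
    payouts = {node: emission * w / total_weight for node, w in weights.items()}
    return EpochResult(burned, emission, emission - burned, payouts)


result = simulate_epoch(job_fees_usd=10_000, render_price_usd=5.0,
                        emission=3_000, weights={"node-a": 3, "node-b": 1})
print(result.burned, result.net_supply_change, result.payouts)
# 2000.0 1000.0 {'node-a': 2250.0, 'node-b': 750.0}
```

In this toy, a higher token price shrinks the burn for the same USD demand, so the net supply change depends on both job demand and price.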

Can You Run a Render Network Node in 2026?

Possibly, if you already own suitable hardware and accept that access is not guaranteed.

Render is not plug-and-play. There is no open enrollment. Whether you can participate depends on which subnet you apply for, what hardware you have, where you are located, and when cohorts happen to open.

Three paths exist for operators right now:

- Core rendering subnet. The original network, handling OctaneRender, Blender, and Cinema 4D jobs. Onboarding has been intermittently limited. Community discussions report waitlists stretching a year or more. The official interest form is still active.

- Dispersed compute subnet. The newer AI and general compute path, launched via RNP-019. Early cohorts have focused on US-based operators with high-end hardware. Formal waitlist at waitlist.renderfoundation.com.

- Salad subnet. Approved via RNP-023. Salad's distributed GPU network (roughly 60,000 daily active machines across 180+ countries) is being integrated as an exclusive subnet, with payments settling in RENDER. Operators already on Salad gain access to Render workloads through this integration. At the time of writing, Render's documentation described no separate external onboarding path for this subnet.

If you don't own eligible hardware yet, the official Dispersed FAQ is blunt: "This is a call for existing hardware. We strongly advise against purchasing new hardware." The same advice is sensible for all three paths.

Operator Access and Onboarding

Core rendering nodes: Fill out the interest form at renderfoundation.com/gpu. If approved, the Render team sends the node client and setup instructions by email. Onboarding is curated and inconsistent. Community reports suggest available slots open irregularly with no published timeline.

Dispersed compute nodes: Apply at waitlist.renderfoundation.com. The form requires hardware inventory, benchmark results, and a speed test. Cohort selection is based on hardware tier and region. Early cohorts have prioritized US-based applicants. Acceptance is not guaranteed regardless of hardware quality.

Salad subnet: If you are already on Salad's network, you will gain access to Render workloads as the integration goes live. For everyone else, check the latest Foundation announcements. A formal external onboarding route through the Salad path had not been documented at the time of writing.

One practical note: If you already own eligible hardware, apply now. Queues are long, submitting costs nothing, and you can always decline if circumstances change before a slot opens.

Hardware Requirements

Rendering Nodes (OctaneRender / Blender / Cinema 4D)

| Requirement | Official Minimum | Community Recommendation |
|---|---|---|
| GPU | NVIDIA CUDA, 6GB VRAM | RTX 4090 or newer, 24GB VRAM |
| System RAM | 32GB | 64–128GB |
| Storage | 100GB fast SSD | 1–2TB NVMe SSD |
| OS | Windows 10/11 | Windows 10/11 (latest) |
| Driver | 566.36 or higher | Latest stable NVIDIA driver |
| Internet | Stable connection | 300 Mbps down / 100 Mbps up (community-reported, not official) |

More VRAM makes a node eligible for more jobs. The official docs list 8GB+ VRAM as preferred, and faster storage has a real impact on performance.
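Before filling in the interest form, a quick self-check against the official minimums can save a wasted application. This Python sketch reads GPU details through `nvidia-smi --query-gpu` (a standard NVIDIA driver tool) and compares them with the table's official column. The function names and the "about 1000 MiB per marketed GB" tolerance are assumptions of this sketch, and RAM is passed in by hand because the standard library has no portable way to read it on Windows.

```python
import csv
import io
import subprocess

# Official minimums for rendering nodes, from the table above.
MIN_VRAM_GB = 6
MIN_RAM_GB = 32
MIN_STORAGE_GB = 100
MIN_DRIVER = (566, 36)


def parse_driver(version: str) -> tuple[int, ...]:
    """'566.36' -> (566, 36), so versions compare numerically."""
    return tuple(int(part) for part in version.strip().split("."))


def parse_gpu_csv(text: str) -> list[dict]:
    """Parse nvidia-smi CSV rows of: name, memory.total (MiB), driver_version."""
    rows = csv.reader(io.StringIO(text), skipinitialspace=True)
    return [{"name": name, "vram_mib": int(mem), "driver": drv}
            for name, mem, drv in (row for row in rows if row)]


def query_gpus() -> list[dict]:
    """Ask the installed NVIDIA driver for per-GPU details."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total,driver_version",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_csv(out)


def check_rendering_node(gpu: dict, ram_gb: float, free_storage_gb: float) -> list[str]:
    """Return shortfalls against the official minimums (empty list = OK)."""
    problems = []
    # Treat marketed "GB" as ~1000 MiB so a 6GB card reporting ~6144 MiB passes.
    if gpu["vram_mib"] < MIN_VRAM_GB * 1000:
        problems.append(f"{gpu['name']}: VRAM below {MIN_VRAM_GB}GB")
    if parse_driver(gpu["driver"]) < MIN_DRIVER:
        problems.append(f"driver {gpu['driver']} is older than 566.36")
    if ram_gb < MIN_RAM_GB:
        problems.append(f"system RAM below {MIN_RAM_GB}GB")
    if free_storage_gb < MIN_STORAGE_GB:
        problems.append(f"less than {MIN_STORAGE_GB}GB free on the chosen drive")
    return problems
```

On the node itself, calling `check_rendering_node(gpu, ram_gb=..., free_storage_gb=shutil.disk_usage(path).free / 1e9)` for each entry from `query_gpus()` lists any shortfalls; fill in your own RAM figure and drive path. Passing the minimums is not the same as being competitive, as the community column shows.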

Dispersed Compute Nodes

| Requirement | Value |
|---|---|
| GPU | Compute score at or above RTX 3050 baseline, approved up to RTX 5090 |
| System RAM | 64GB minimum |
| Storage | Verify current waitlist FAQ; sources reference both 1TB and 2TB NVMe SSD |
| OS | Ubuntu 22.04 or 24.04 (native Linux recommended) |
| Software | Docker, NVIDIA Container Toolkit |
| Internet | 100 Mbps down / 75 Mbps up (official minimum) |

Storage figures vary across source documents. Confirm against the current waitlist FAQ and RNP-019 before provisioning anything. Running WSL instead of native Linux may hurt compatibility and acceptance odds.
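The Dispersed basics can be screened the same way on the Linux machine you plan to submit. This Python sketch checks the OS release, a WSL kernel, RAM, your own speed-test figures, and whether `docker` and `nvidia-ctk` (the NVIDIA Container Toolkit CLI) are on the PATH. It is an informal pre-check under those assumptions, not the official benchmark; storage is deliberately left out because the sources disagree, and all function names are hypothetical.

```python
import os
import shutil

# Dispersed values from the table above (storage omitted: 1TB vs 2TB is unresolved).
ALLOWED_UBUNTU = {"22.04", "24.04"}
MIN_RAM_GB = 64
MIN_DOWN_MBPS, MIN_UP_MBPS = 100, 75
REQUIRED_TOOLS = ("docker", "nvidia-ctk")  # nvidia-ctk ships with NVIDIA Container Toolkit


def parse_os_release(text: str) -> dict[str, str]:
    """Parse /etc/os-release style KEY=value lines."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        info[key] = value.strip().strip('"').strip("'")
    return info


def total_ram_gb() -> float:
    """Physical RAM in decimal GB on Linux, via POSIX sysconf."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1e9


def check_dispersed(os_release: dict[str, str], proc_version: str, ram_gb: float,
                    down_mbps: float, up_mbps: float, which=shutil.which) -> list[str]:
    """Return shortfalls against the published Dispersed values (empty list = OK)."""
    problems = []
    if os_release.get("ID") != "ubuntu" or os_release.get("VERSION_ID") not in ALLOWED_UBUNTU:
        problems.append("OS is not Ubuntu 22.04 or 24.04")
    if "microsoft" in proc_version.lower():  # WSL kernels report "microsoft" here
        problems.append("running under WSL; native Ubuntu is recommended")
    if ram_gb < MIN_RAM_GB:
        problems.append(f"system RAM below {MIN_RAM_GB}GB")
    if down_mbps < MIN_DOWN_MBPS or up_mbps < MIN_UP_MBPS:
        problems.append(f"bandwidth below {MIN_DOWN_MBPS} down / {MIN_UP_MBPS} up Mbps")
    for tool in REQUIRED_TOOLS:
        if which(tool) is None:
            problems.append(f"{tool} not found on PATH")
    return problems
```

On the candidate machine, pass the parsed contents of `/etc/os-release`, the text of `/proc/version`, `total_ram_gb()`, and the figures from your own speed test. The GPU compute score still comes from the official benchmark tool.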

Software and Account Requirements

Rendering nodes: Windows 10/11, NVIDIA driver 566.36 or higher, and an Ethereum or Solana-compatible wallet. The wallet address must match what you submitted on the application. The node client arrives by email after approval.

Dispersed compute nodes: Ubuntu 22.04 or 24.04, Docker, NVIDIA Container Toolkit, and a Solana-compatible wallet for reward deposits. The application includes running a benchmark tool and uploading the results.

Step-by-Step Setup Overview

Exact steps depend on which subnet you apply for and what the onboarding materials say at the time you apply. This is a high-level process only.

- Check current eligibility. Review the waitlist FAQ and RNP-019 for Dispersed, or the interest form page for rendering nodes. Hardware requirements can change. Verify now, not just when you first read this.

- Model your costs before applying. Work out your electricity rate, expected power draw, and whether the illustrative reward figures would cover your costs at current token prices. Use 20–30% job utilization for conservative modeling, not the official 50% example.

- Apply or join the relevant waitlist. Rendering nodes: renderfoundation.com/gpu. Dispersed: waitlist.renderfoundation.com.

- Wait for approval. No timeline is published. Follow the Foundation's Discord and announcements for cohort updates.

- Install required software once accepted. The team sends the node client and setup instructions by email.

- Benchmark and configure. Connect your wallet, point your temp folder at your fastest drive, and make sure drivers and software are current.

- Monitor from day one. Use the Render Network dashboard alongside your own records to track epoch rewards, uptime, and running costs.
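For the monitoring step, a local ledger makes it easy to compare what the dashboard shows with what the node actually costs to run. A minimal Python sketch, assuming you log one row per epoch by hand; the file layout and field names are hypothetical, not a Render export format.

```python
import csv
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class EpochRecord:
    epoch: str               # epoch label, copied from the dashboard
    render_earned: float     # RENDER credited for the epoch
    uptime_pct: float        # your own uptime measurement
    kwh_used: float          # from a wall meter or smart plug
    render_price_usd: float  # token price when you log the entry
    rate_usd_per_kwh: float  # your electricity tariff

    @property
    def net_usd(self) -> float:
        """Reward value minus electricity cost (other costs not included)."""
        return self.render_earned * self.render_price_usd - self.kwh_used * self.rate_usd_per_kwh


FIELDNAMES = [f.name for f in fields(EpochRecord)]


def append_record(path: Path, rec: EpochRecord) -> None:
    """Append one epoch to a CSV ledger, writing the header on first use."""
    new_file = not path.exists()
    with path.open("a", newline="") as fh:
        writer = csv.DictWriter(fh, fieldnames=FIELDNAMES)
        if new_file:
            writer.writeheader()
        writer.writerow(asdict(rec))


def load_records(path: Path) -> list[EpochRecord]:
    """Read the ledger back, converting numeric columns to float."""
    with path.open(newline="") as fh:
        return [EpochRecord(epoch=row["epoch"],
                            **{k: float(v) for k, v in row.items() if k != "epoch"})
                for row in csv.DictReader(fh)]
```

Summing `net_usd` over the loaded records gives a running electricity-adjusted result you can set against depreciation and your own time.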

Expected Workloads

Rendering nodes handle frame-based jobs from artists and studios using OctaneRender, Blender, and Cinema 4D. Job assignment is based on hardware score and node reputation. Completing jobs cleanly builds reputation. Failing to complete them reduces it.

Dispersed compute nodes run AI inference, model training, and general compute workloads. Render has expanded into this area through the Dispersed subnet and the Salad integration. Expanding into AI compute does not mean steady job flow for any individual node.

Community reports suggest job volume varies a lot by node, cohort, hardware tier, and time period. Some epochs see very low total compute paid out across the whole network.

Rewards, Availability Incentives, and Payouts

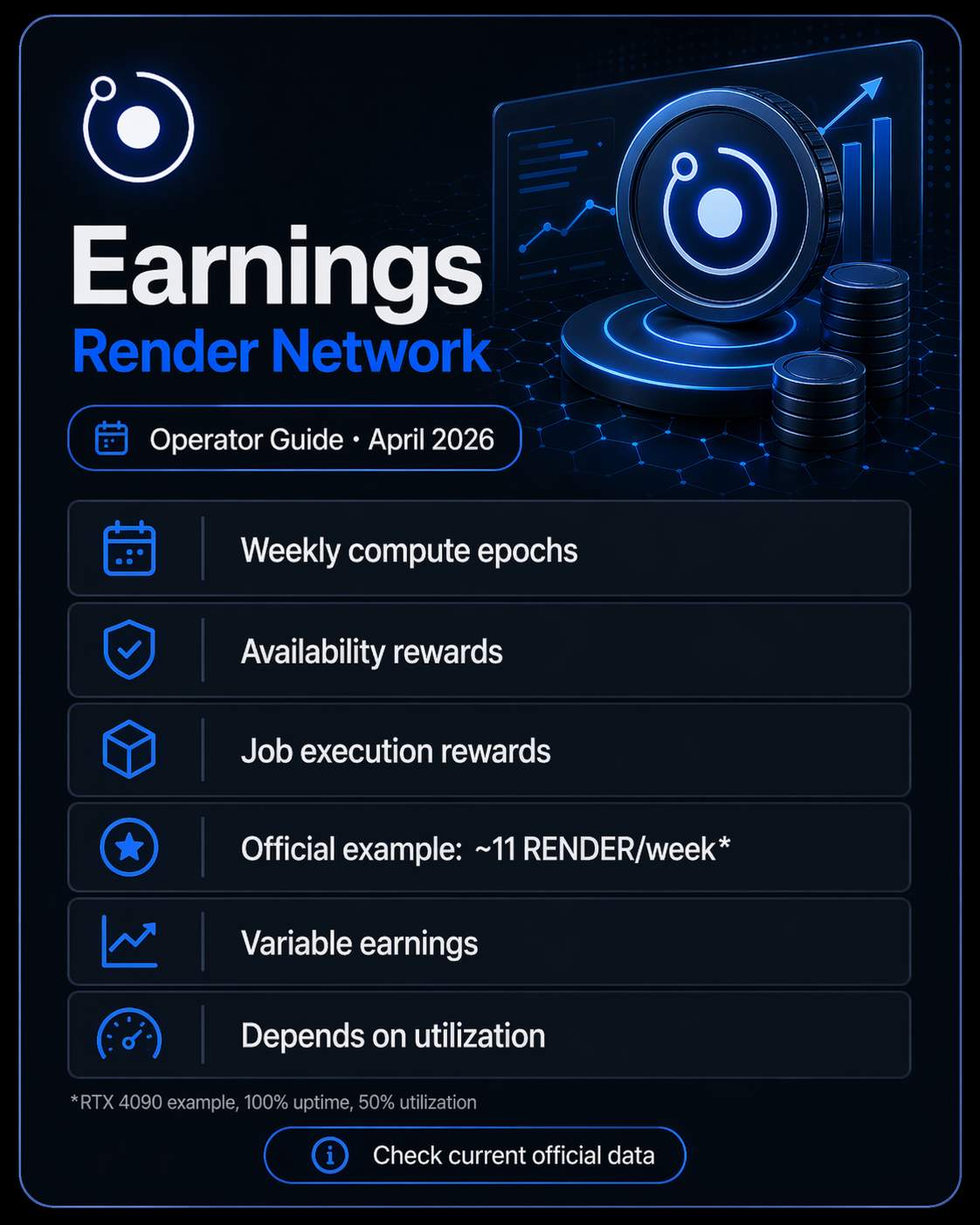

Two main earning channels: availability rewards for being online, and job execution rewards for work completed.

For Dispersed, the official "Understanding Rewards" documentation describes weekly Compute Epochs with three components: availability, job execution, and preparation (setup work including downloads and pre-processing).

The documentation gives this illustrative example for an RTX 4090-class node running at 100% uptime and 50% job utilization: roughly 6 RENDER for availability, 5 RENDER for job execution, and 0.05 RENDER for preparation. About 11 RENDER per week total.

That is an official example scenario. It is not a typical earnings forecast. Real rewards depend on approval status, cohort assignment, actual utilization, epoch parameters, and whatever incentive programs are active. Community reports suggest real fill rates are frequently lower than the 50% figure in the example. When running your own numbers, start with 20–30% utilization, not 50%.
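To apply the 20–30% advice, one rough approach is to scale the official example linearly. The sketch assumes availability rewards scale with uptime and that job-execution and preparation rewards scale with utilization relative to the example's 50%. Render's actual formula is set by the reward framework and epoch parameters, so treat the output as a planning estimate only.

```python
# Official illustrative figures for an RTX 4090-class node, per weekly epoch.
EXAMPLE_AVAILABILITY = 6.0   # RENDER at 100% uptime
EXAMPLE_JOB = 5.0            # RENDER at 50% job utilization
EXAMPLE_PREP = 0.05          # RENDER at 50% job utilization
EXAMPLE_UTILIZATION = 0.5


def weekly_render(uptime: float, utilization: float) -> float:
    """Linear scaling of the official example (an assumption, not Render's formula).

    uptime and utilization are fractions between 0 and 1.
    """
    availability = EXAMPLE_AVAILABILITY * uptime
    job_scale = utilization / EXAMPLE_UTILIZATION
    return availability + (EXAMPLE_JOB + EXAMPLE_PREP) * job_scale


for util in (0.2, 0.3, 0.5):
    print(f"{util:.0%} utilization: {weekly_render(1.0, util):.2f} RENDER/week")
# 20% utilization: 8.02 RENDER/week
# 30% utilization: 9.03 RENDER/week
# 50% utilization: 11.05 RENDER/week
```

Under this assumption, dropping from 50% to 25% utilization cuts weekly rewards by about 23% rather than in half, because the availability component does not depend on jobs.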

On payouts: some operator discussions have flagged payout timing issues and manual review friction. A Foundation representative has confirmed that reward distribution, while largely automated, still requires a manual sign-off each epoch. Beyond that confirmation, reports of delays are operator-reported concerns, not confirmed universal conditions. They are worth knowing about if you depend on weekly payments arriving on schedule.

For current, unfiltered operator intelligence on job volume and payout timing, the Render Network Discord is more useful than X. The node-revenue and report-issues channels are where the honest feedback lives.

Costs and Profitability Considerations

A full workstation running an RTX 4090 under sustained load draws roughly 700–800W from the wall. For a system running continuously at that level, electricity is a real cost.

| Region | Approximate Rate |
|---|---|
| US residential average | ~$0.13 per kWh |
| EU average | ~$0.25–0.30 per kWh |
| Southeast Asia | ~$0.08–0.12 per kWh |

At EU rates, a continuously loaded system in that range costs roughly $125–180 per month in electricity alone (0.7–0.8 kW over about 730 hours is roughly 510–585 kWh per month). Whether the illustrative reward figures cover that depends on actual utilization, current token price, and hardware efficiency, none of which is fixed.
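The monthly figure is simple arithmetic: watts / 1000 × hours × rate. This Python sketch uses the rates from the table above and the 700–800W wall-draw range; the 730-hour month is an average that assumes the system never idles, so substitute your own measured draw.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12


def monthly_cost(watts: float, rate_usd_per_kwh: float,
                 hours: float = HOURS_PER_MONTH) -> float:
    """Electricity cost in USD for a constant wall draw over one month."""
    return watts / 1000 * hours * rate_usd_per_kwh


# (low, high) rates from the table above, in USD per kWh.
RATES = {
    "US residential average": (0.13, 0.13),
    "EU average": (0.25, 0.30),
    "Southeast Asia": (0.08, 0.12),
}

for region, (low_rate, high_rate) in RATES.items():
    low = monthly_cost(700, low_rate)    # lower draw at the lower rate
    high = monthly_cost(800, high_rate)  # higher draw at the higher rate
    print(f"{region}: ${low:.0f} to ${high:.0f} per month")
# US residential average: $66 to $76 per month
# EU average: $128 to $175 per month
# Southeast Asia: $41 to $70 per month
```

The EU line falls inside the $125–180 range quoted above.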

Build your own spreadsheet: local electricity rate, conservative utilization assumption, actual measured power draw, current RENDER price. Do not use numbers from this guide to justify a hardware purchase.
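One useful spreadsheet cell is the break-even token price: the RENDER price at which expected rewards just cover monthly costs. A Python sketch; the 8 RENDER/week and $200/month inputs are hypothetical placeholders (for example, electricity plus an allowance for depreciation), and the conversion assumes one Dispersed epoch per week.

```python
WEEKS_PER_MONTH = 730 / 168  # average hours per month / hours per week, about 4.35


def break_even_price(weekly_render: float, monthly_cost_usd: float) -> float:
    """RENDER price (USD) at which rewards just cover monthly costs."""
    if weekly_render <= 0:
        raise ValueError("weekly_render must be positive")
    return monthly_cost_usd / (weekly_render * WEEKS_PER_MONTH)


# Hypothetical inputs: 8 RENDER/week, $200/month in total costs.
print(f"break-even: ${break_even_price(8.0, 200.0):.2f} per RENDER")
# break-even: $5.75 per RENDER
```

If the current RENDER price sits below the break-even figure at your conservative utilization, the numbers do not work at today's price.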

Other costs worth accounting for: hardware depreciation, cooling, internet bandwidth, and the ongoing time cost of software maintenance and monitoring.

Risks and Limitations

| Risk | Notes |
|---|---|
| Onboarding not guaranteed | Waitlists are curated. Applying does not mean you get in. |
| Hardware mismatch | Mid-tier GPUs may be less competitive for Dispersed cohorts. Check current criteria first. |
| Low utilization | Community reports suggest fill rates vary widely. The official 50% example is not a baseline. |
| Payout delays | Operator-reported concerns about manual review and delayed epoch payments. |
| Token price volatility | All rewards are in RENDER. The USD value moves with the token. |
| Governance changes | Reward parameters and emissions are set by governance proposals. RNP-022 covers Year 3, but rules can change. |
| Regional bias | Early Dispersed cohorts have focused heavily on US-based operators. |
| Hardware purchase risk | The official FAQ explicitly warns against buying hardware to join the waitlist. |
| Storage uncertainty | Source documents show both 1TB and 2TB NVMe SSD for Dispersed. Verify before provisioning. |

Troubleshooting and Common Issues

- Long waitlist, no response. No timeline is published. Follow the Foundation's Discord and official announcements for cohort news.

- Benchmark tool problems. The Dispersed benchmark is a command-line tool, not a GUI. Depending on your OS, output goes to a bench_output folder on the desktop or to the home directory.

- Driver or Docker issues. Make sure NVIDIA Container Toolkit is installed correctly on Linux. Native Ubuntu is the safer choice over WSL for Dispersed.

- Low job assignment after approval. Volume varies by period and cohort. Check your epoch rewards against network-level data on the dashboard to understand whether the issue is yours or network-wide.

- Delayed or missing rewards. Start with the official Discord support channels. Keep records of your epoch activity.

- Storage bottlenecks. Temp folder should point to your fastest drive. Slow storage limits the complexity of jobs your node can take on.
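Two of the items above, Docker GPU access and temp-drive speed, are easy to check with a short script. This Python sketch is a hypothetical helper, not an official Render tool: the container check runs `nvidia-smi` inside a disposable `ubuntu` container with Docker's `--gpus all` flag, similar to the sample workload in NVIDIA's Container Toolkit install guide, and the write test is a crude sequential benchmark. Function names and the 256 MiB default are assumptions.

```python
import os
import shutil
import subprocess
import tempfile
import time

# Container smoke test modeled on NVIDIA's Container Toolkit sample workload.
SMOKE_TEST = ["docker", "run", "--rm", "--gpus", "all", "ubuntu", "nvidia-smi"]


def gpu_container_check(which=shutil.which, timeout_s: int = 300) -> tuple[bool, str]:
    """Run nvidia-smi in a throwaway container; return (ok, detail)."""
    if which("docker") is None:
        return False, "docker not found on PATH"
    if which("nvidia-ctk") is None:
        return False, "nvidia-ctk not found; NVIDIA Container Toolkit may be missing"
    try:
        proc = subprocess.run(SMOKE_TEST, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return False, "container did not finish in time (the image pull may be slow)"
    if proc.returncode != 0:
        return False, proc.stderr.strip() or f"exit code {proc.returncode}"
    return True, proc.stdout


def write_throughput_mib_s(directory: str, size_mib: int = 256) -> float:
    """Crude sequential-write test: write size_mib of random data, fsync, time it."""
    block = os.urandom(1024 * 1024)  # 1 MiB of random bytes
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as fh:
            for _ in range(size_mib):
                fh.write(block)
            fh.flush()
            os.fsync(fh.fileno())  # force data to disk so the page cache doesn't inflate results
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)
    return size_mib / elapsed
```

If the container check fails on Linux, NVIDIA's install guide configures Docker with `sudo nvidia-ctk runtime configure --runtime=docker` followed by a Docker restart; you may also need `sudo` or membership in the `docker` group to run containers. For storage, `max(candidate_dirs, key=write_throughput_mib_s)` picks the fastest of the directories you list.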

Alternatives to Consider

Before committing hardware to Render, it is worth looking at other options in the GPU compute and DePIN space. io.net, Nosana, and Akash Network each have different onboarding models, hardware requirements, and reward structures. What works depends on your specific setup, location, and how much uncertainty you are comfortable with.

Final Assessment

Render Network is one of the more established GPU DePIN networks. It has documented workloads, active governance, and real infrastructure behind it. For operators who already own high-end NVIDIA hardware, have access to low-cost electricity, and can handle onboarding uncertainty and variable earnings, joining the waitlist is a reasonable thing to evaluate. Queues are long, so applying early makes sense. Just go in treating approval and future workload as uncertain rather than assumed.

It is not a source of predictable income. It is not a reason to buy new GPUs. The economics depend on token price, job utilization, cohort selection, and governance decisions. Model your own situation before applying.

Sources

Official

- Render Network Knowledge Base — know.rendernetwork.com

- Render Compute Network Waitlist FAQ — know.rendernetwork.com

- Dispersed "Understanding Rewards" guide (April 2026)

- Render Foundation node operator page — rendernetwork.com/participate-node-operators

Governance / Dashboard

- RNP-019 (Dispersed compute subnet authorization)

- RNP-021 (Dispersed reward framework)

- RNP-022 (Year 3 BME emissions)

- RNP-023 (Salad subnet integration — approved by governance, March/April 2026)

- Render Network Dashboard

Partner / Ecosystem

- Salad Network — subnet integration announcement (SaladCloud Blog)

Community / Operator Reports

- Reddit operator discussions (r/rendernetwork and related GPU monetization threads)

- Render Network Discord — node-revenue and report-issues channels (operator-reported, not verified universal conditions)

Third-Party Context

- Messari — Render Network overview (November 2025)

- CoinMarketCap — token supply data